Publications

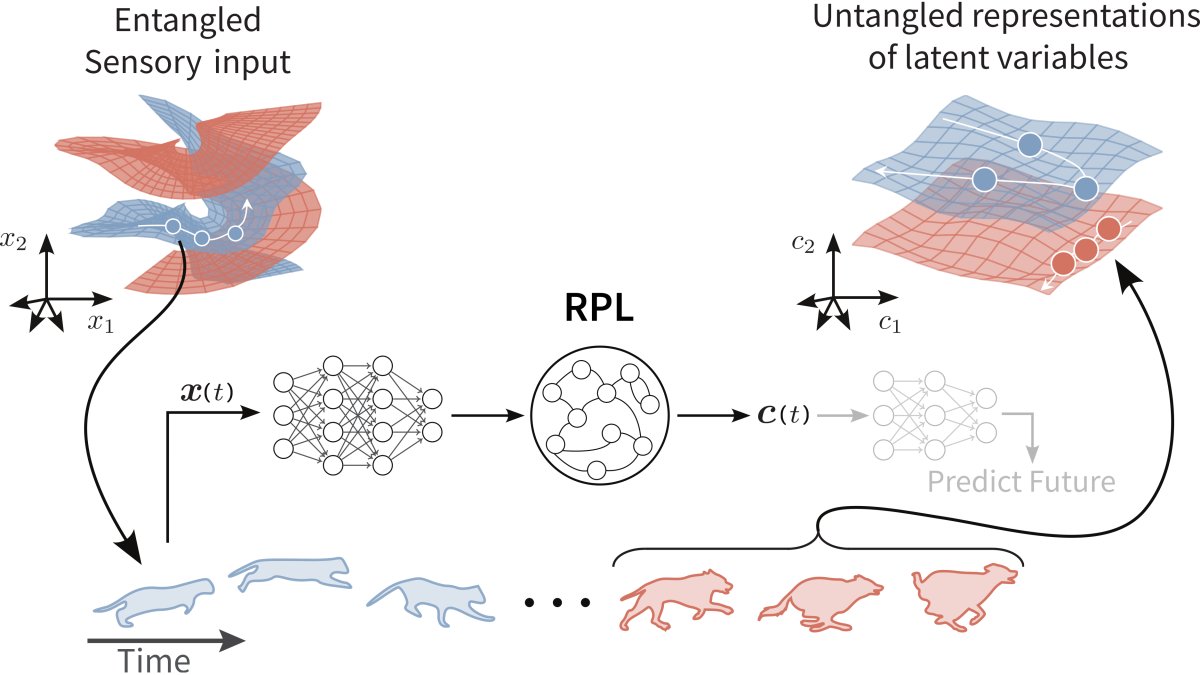

Understanding cortical computation through the lens of joint-embedding predictive architectures

Mohammadi, A. G.*, Halvagal, M. S.*, & Zenke, F.

bioRxiv 2026

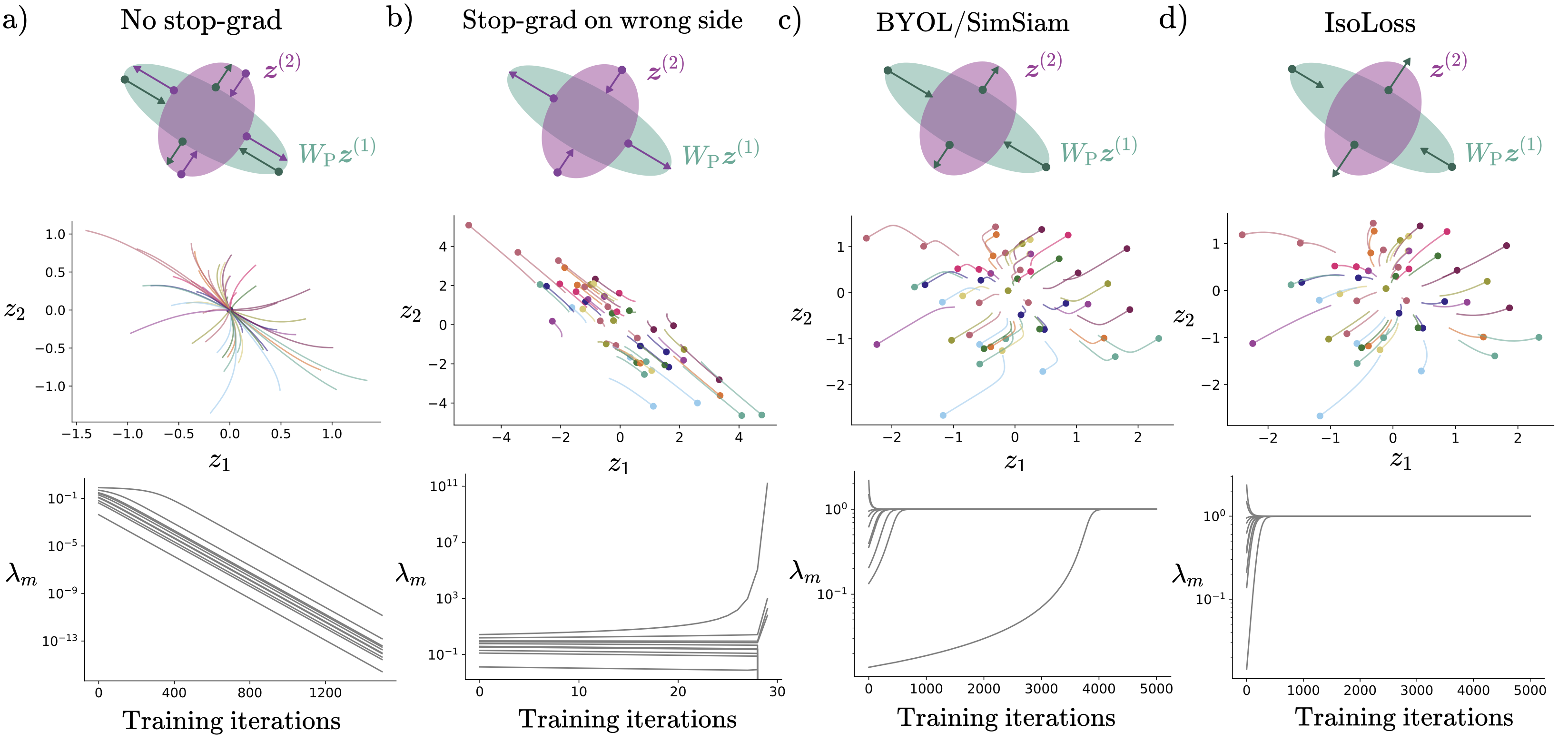

Implicit variance regularization in non-contrastive SSL

Halvagal, M. S.*, Laborieux, A.*, & Zenke, F.

Advances in Neural Information Processing Systems 36 (NeurIPS 2023)

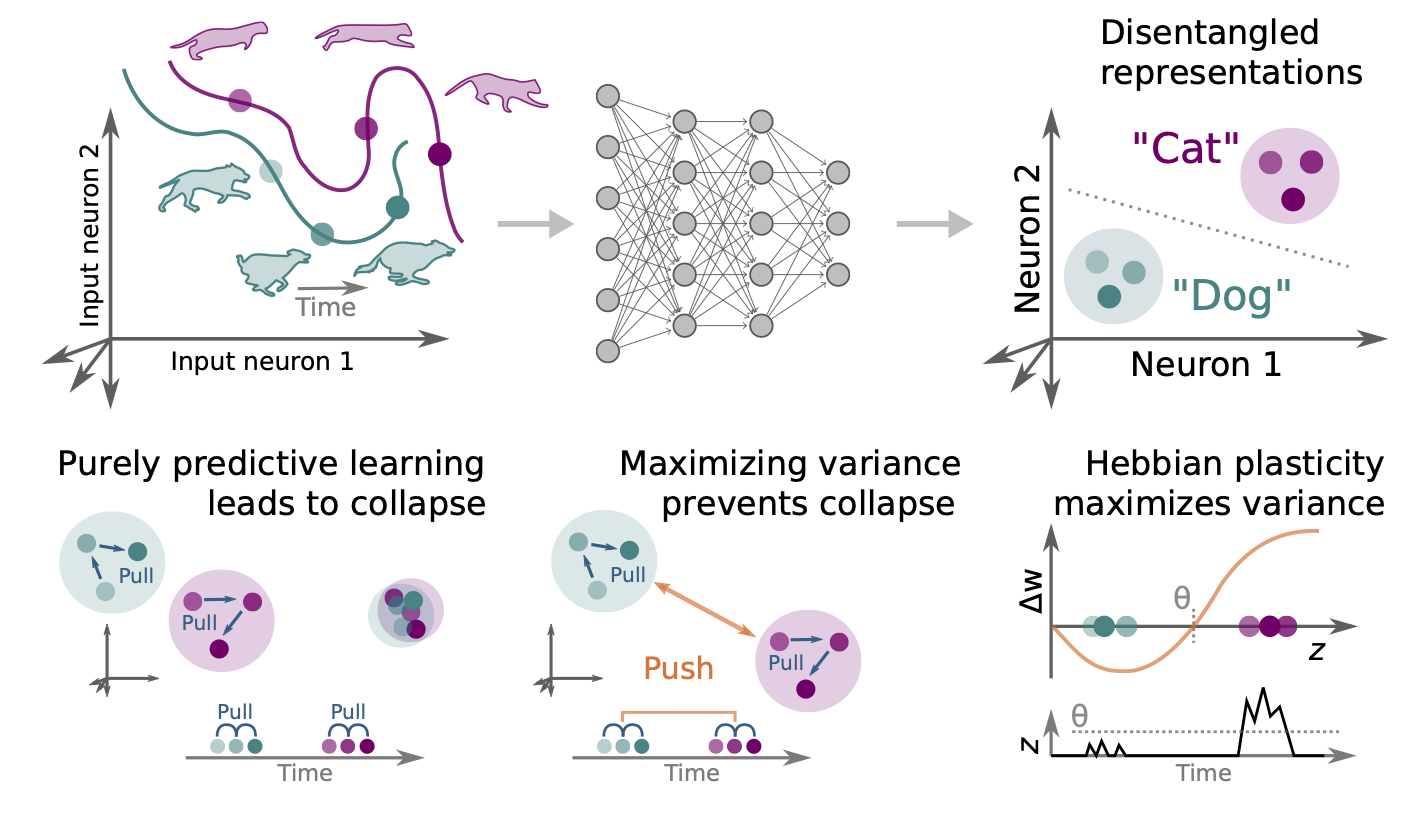

The combination of Hebbian and predictive plasticity learns invariant object representations in deep sensory networks

Halvagal, M. S., & Zenke, F.

Nature Neuroscience

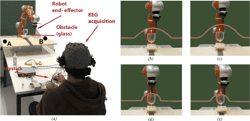

Inferring subjective preferences on robot trajectories using EEG signals

Iwane, F., Halvagal, M. S., Iturrate, I., Batzianoulis, I., Chavarriaga, R., Billard, A., & Millán, J. D. R.

2019 9th International IEEE/EMBS Conference on Neural Engineering (NER)
